Nebula Originals

TLDR: We’re going big on Nebula Originals, and we’ve hired a new chief content officer to help make it happen.

Nebula began life as a joint venture between Standard, a talent management company, and the group of creators we represent. The partnership is simple: the creators provide streaming licenses, and we provide the resources to build the platform itself. We split the profits equally, and if we ever sell Nebula, we split the proceeds evenly.

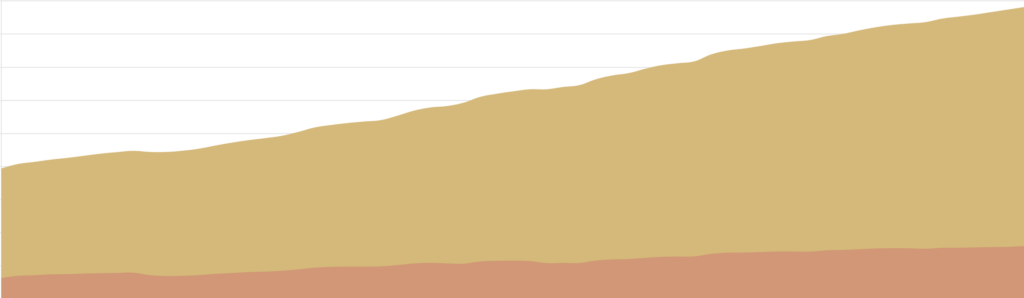

Our build mode energy has been well spent. Four years after launch, we have apps available for iPhone, iPad, web, Android, Apple TV, Roku, Android TV, Samsung, and LG. We launched our own API, built a custom streaming backend, and added support for classes and podcasts. As a platform, Nebula is largely built. As we look at the horizon, where do we put our energy to keep up our half of the bargain?

Earlier this year I talked about Snow Leopard, our plan to spend 2023 focusing on refinements and bug fixes rather than major new features. By June we had seen so much progress in our thinking and so much benefit to the organization as a whole that we realized Snow Leopard isn’t a one-year project; it’s a way of life. This needs to be our new normal. We’ll still add features, of course: our recommendations system is in development and is already helping us understand new ways to help viewers find things they’d enjoy. Our work is very, very far from complete and we have a long roadmap in front of us, but there’s an argument to be made that our build mode phase is over for the platform. Nebula as a piece of software is much more in run mode.

Where will we put that build mode energy? Nebula Originals.

For our first few years, Nebula has primarily been a creator streaming service, not a content streaming service. Our focus has been on creating a wonderful home for all of the things our creators make. As a bootstrapped independent company, we don’t have billions of dollars to throw at content development; our experiments have largely been smaller-budget projects that tackle more adult subject matter or break the creator’s typical format. The few times we’ve really swung for the fences, though, the results have been extremely compelling. Night of the Coconut, The Prince, and even Jet Lag were designed to get a little more ambitious and see what happened.

What happened is that the audience has started to associate us with specific Originals as much as specific creators. There’s an element of prestige in these projects, which grants Nebula itself an air of prestige. We think this is the most compelling future for Nebula.

Over the last few months we’ve completely redesigned and re-implemented our Nebula Originals development process. That process, we feel, should be creator-led, so we’ve turned to someone with an excellent track record for successfully developing and executing a variety of formats: Sam Denby, of Wendover, Half as Interesting, Extremities, and Jet Lag fame. (He didn’t have enough to do.) Sam has joined the team as our chief content officer to lead Nebula Originals development, aided by both our internal team and by the team at Wendover Productions.

This is a seismic shift for us. While we’re slowing the pace of growth for the software team, we’re not stopping entirely, and this change won’t cause money to magically appear in the hands of the content development team. This will be a steady shift across the back half of this year, with the real gains coming in 2024. We hope to use the development process itself to generate enthusiasm for projects by announcing them with more fanfare and much earlier. (We’re also looking at ways for the audience to directly impact budgets for given projects, but more on that later.)

We’re actively soliciting pitches from our creators and a select few outside friends. The team has crafted a pitch guide, and built a system to offer one-on-one development support for folks who have never pitched a show to a network or streamer before. The new process is in full swing, and we’ve already heard some genuinely bonkers ideas that I can’t wait to help make into reality. Expect to hear more soon, as we begin to announce projects in development and build out our slate for Q4 and 2024.

Nebula creators are an immensely talented group of people, and we want Nebula to not just be a home for what they make, but an epicenter of creative and professional growth, empowering and enabling them to create things above and beyond what’s possible on public, user-generated platforms.

The creators built Nebula. Now it’s time for Nebula to build the creators.